KEY TAKEAWAYS

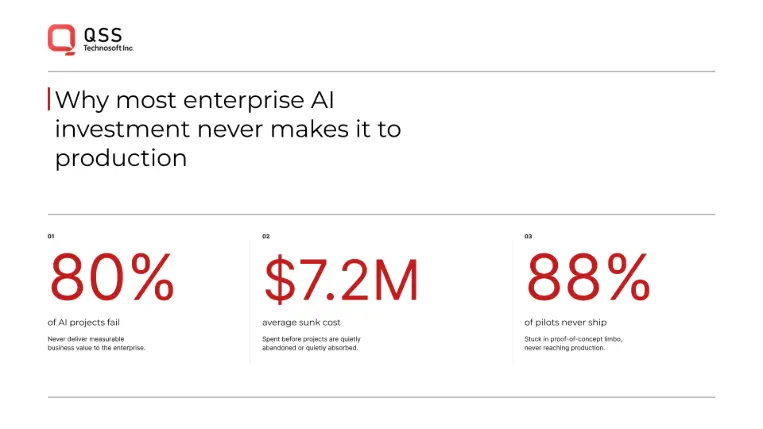

- 80% of enterprise AI projects fail to deliver intended business value; 88% of AI pilots never reach production

- Consulting firms optimize for strategy, not execution: they're paid by the engagement, not by the outcome

- The strategy-to-execution gap is expensive: the average sunk cost per abandoned AI initiative was $7.2M in 2025

- Production-focused teams prioritize ruthlessly — one model in 60-90 days, measure honestly, kill what doesn't work

- Only 25% of enterprise AI initiatives deliver expected ROI, and most take 2-4 years when they do

- Evaluation framework: Ask your partner 5 specific questions to determine if they ship production systems or sell frameworks

INTRODUCTION

Your CTO promised the CFO a $2M ROI from your data science initiative. Eighteen months in, you've got a well-architected strategy document, a vendor-approved roadmap, and three abandoned pilot projects. The strategy was sound. The execution was not.

This is the enterprise data science gap—and it's costing your organization millions in sunk costs, talent churn, and missed competitive advantage.

The numbers tell the story:

- 80% of enterprise AI projects fail to deliver intended business value (Pertama Partners, 2026)

- 88% of AI pilots never reach production, regardless of company size

- 42% of companies abandoned at least one AI initiative in 2025, at an average sunk cost of $7.2M per abandoned initiative (Deloitte, 2025)

- Enterprises invested $684B in AI in 2025, but $547B (80%) of that failed to deliver intended value

Most enterprise organizations face the same brutal choice: hire expensive consultants to build frameworks for data science maturity, or hire a scrappy startup to build models without thinking about scale. Neither option produces the systems that actually run in production and drive revenue. Strategy consultants deliver PowerPoint. Technology vendors deliver tools. What enterprises need—and rarely find—is production-grade data science architecture combined with business acumen.

This essay breaks down what production data science actually requires, why most enterprises get it wrong, and how to evaluate whether your partner actually ships production systems or just sells strategy.

THE HIDDEN COST OF STRATEGY WITHOUT IMPLEMENTATION

Here's what typically happens:

A Fortune 500 enterprise engages a top-tier consulting firm to design their "AI transformation roadmap." Over three months, a team of MBAs and junior engineers produces a 200-slide deck covering:

- AI governance frameworks

- Skills assessments for the data team

- A phased multi-year roadmap

- Vendor evaluation matrices

- "Organizational readiness" assessments

It's thorough. It's defensible in budget meetings. It costs $500K-$1.5M.

Then the real work begins. Your team tries to execute the roadmap, and immediately discovers the gap: the consultants were paid to think about data science, not to build it. There's no guidance on:

- How to actually structure the ML pipeline so it doesn't break in production

- What "real" governance means when you're shipping three models a week

- How to handle the inevitable retraining failures, data drift, and model degradation

- Why the vendor you selected works fine in demos but chokes at scale

- How to measure ROI in a way that doesn't require a PhD in statistics

Your team is stuck translating abstract principles into concrete infrastructure. Some projects move forward. Most don't. Eighteen months later, you've spent $3M-$5M (strategy + failed pilots + salaries) and have 1-2 models in production that might not even move the business needle.

This is the default path for enterprises hiring traditional consulting firms.

The alternative—hiring a small ML engineering firm to "just build stuff"—comes with its own problems. Those firms ship models, but not systems. They optimize for technical elegance, not business outcomes. When they leave, nobody knows how to maintain the code. The technical debt accrues. The models degrade. Three years later, you're hiring another firm to "modernize" your infrastructure.

Both paths are expensive. Both paths are slow. Both paths prioritize the wrong metric: strategy or technical purity instead of production impact.

WHAT PRODUCTION DATA SCIENCE ACTUALLY REQUIRES

Production data science isn't a checkbox on a consulting roadmap. It's a discipline.

The enterprises that successfully run data science at scale share a common architecture—not in terms of specific tools (they might use Kubernetes or serverless, Snowflake or BigQuery), but in terms of principles:

1. Ruthless Prioritization

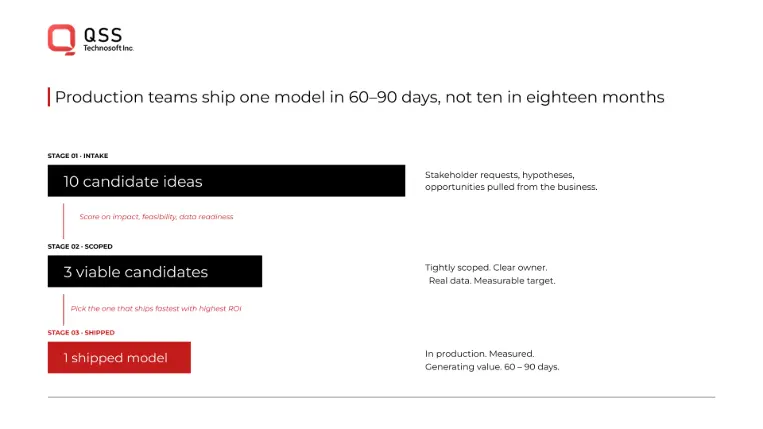

You cannot build everything. Consultants and vendors want you to think big ("build a modern data lake, implement AI governance, upskill 500 people"). Real production shops prioritize one outcome: Which single model, shipped in the next 60-90 days, moves the revenue needle the most?

Enterprise executives don't care about model accuracy on a holdout set. They care about whether the system works, what it costs to run, and whether it actually changes customer behavior or internal efficiency. Every model should answer a specific business question. If you can't explain the ROI in one sentence, you don't build it.

This sounds obvious. Most enterprises violate this rule. They build a "data science center of excellence" and task it with "exploring AI opportunities." Exploration is strategy. Production is shipping the model that directly impacts revenue.

2. Scalable Infrastructure, Not Gold-Plated Tools

Large enterprises often over-index on tooling. They buy vendor platforms (Databricks, DataRobot, etc.) and assume that owning the right tool solves the problem. It doesn't.

The enterprises that run successful production systems share a different philosophy: Prefer boring infrastructure to flashy platforms.

A production data science system needs:

- A reliable data pipeline that doesn't mysteriously break at 2 AM

- A way to version and reproduce models (git + artifact storage, nothing fancy)

- Monitoring and alerting for model degradation (not complex; just honest)

- A process for retraining and deploying models without manual intervention

- Clear ownership and runbooks so one person leaving doesn't break everything
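One way to make "monitoring and alerting for model degradation" concrete is a scheduled drift check. The sketch below uses the population stability index (PSI), a common choice though not one this article prescribes, to compare the distribution of a model input or score in production against a reference window such as the training data. The function names, the ten quantile buckets, and the 0.2 alert threshold are illustrative assumptions (0.2 is a widely quoted rule of thumb, not a standard).

```python
import bisect
import math
from typing import List, Sequence


def quantile_edges(reference: Sequence[float], n_bins: int = 10) -> List[float]:
    """Interior bucket edges taken from quantiles of the reference (e.g. training-time) data."""
    ordered = sorted(reference)
    return [ordered[int(i * len(ordered) / n_bins)] for i in range(1, n_bins)]


def bucket_shares(values: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Share of values in each bucket; empty buckets get a tiny floor so log() stays finite."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        # bisect_right returns how many edges are <= v, which is the bucket index.
        counts[bisect.bisect_right(edges, v)] += 1
    floor = 1e-6
    return [max(c / len(values), floor) for c in counts]


def population_stability_index(
    reference: Sequence[float], current: Sequence[float], n_bins: int = 10
) -> float:
    """PSI = sum over buckets of (actual - expected) * ln(actual / expected)."""
    edges = quantile_edges(reference, n_bins)
    expected = bucket_shares(reference, edges)
    actual = bucket_shares(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


def drift_alert(
    reference: Sequence[float], current: Sequence[float], threshold: float = 0.2
) -> bool:
    """Hypothetical alert rule: flag the model for review when PSI exceeds the threshold."""
    return population_stability_index(reference, current) > threshold
```

In practice a job would call something like `drift_alert(training_scores, last_week_scores)` on a schedule and page the owning engineer when it returns `True`. The point is the "boring" shape: one number per feature or score, one threshold, one owner.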

These aren't novel. They're boring. But they're non-negotiable. Most consulting firms won't recommend them because they don't sell vendor relationships.

3. Honest Measurement of What Works

Here's where production data science departments diverge from consulting shops: they're ruthless about killing things that don't work.

A model shipped to production should have:

- A clear success metric (not "model accuracy" but something your business cares about)

- A measurement window (does it change behavior in 30, 60, or 90 days?)

- A kill threshold (if the metric doesn't move by X%, we sunset it)
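These three requirements can be written down as an explicit rule that the business owner signs off on before launch. This is a minimal sketch: the `ModelScorecard` and `decide` names and the example figures are hypothetical, and "lift" is assumed to mean the relative change in the business metric against a baseline measured over the same window (for example, a holdout group that did not receive model-driven decisions).

```python
from dataclasses import dataclass


@dataclass
class ModelScorecard:
    """Hypothetical record of one production model's agreed success criteria."""
    metric_name: str       # a business metric, not model accuracy
    window_days: int       # measurement window agreed before launch (e.g. 30, 60, 90)
    kill_threshold: float  # minimum relative lift to keep the model, e.g. 0.05 = 5%


def relative_lift(treated: float, baseline: float) -> float:
    """Relative change of the treated metric over the baseline."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute relative lift")
    return (treated - baseline) / baseline


def decide(card: ModelScorecard, days_observed: int, treated: float, baseline: float) -> str:
    """Return 'keep measuring', 'expand', or 'sunset' according to the scorecard."""
    if days_observed < card.window_days:
        return "keep measuring"
    lift = relative_lift(treated, baseline)
    return "expand" if lift >= card.kill_threshold else "sunset"
```

For example, with `ModelScorecard("repeat_purchase_rate", window_days=90, kill_threshold=0.05)`, a treated rate of 0.132 against a 0.120 baseline after 90 days is a 10% lift and returns "expand", while 0.123 against 0.120 (2.5% lift) returns "sunset". A production version would also check that the lift is distinguishable from noise, which this sketch omits.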

Most consulting frameworks talk about "measuring ROI" in a footnote. Production teams center on it. If a model doesn't drive measurable business value within a defined window, you deprecate it and redeploy resources to the next priority.

This is uncomfortable for organizations trained on "build it and it will create value eventually." It's also how you avoid the sunk-cost trap of maintaining technical debt from last year's "strategic initiative."

Why this matters: Only 25% of enterprise AI initiatives deliver expected ROI (IBM CEO Study, 2025). The difference isn't smarter people. It's measurement discipline.

4. Cross-Functional Ownership

The data science team doesn't own the outcome. The business owner does.

Consultants often recommend "appointing a Chief AI Officer" or "establishing a data science center of excellence." These structures create silos. The reality of production data science is that the model exists to solve a specific business problem. The business owner needs to care enough to measure it, iterate on it, and drive adoption.

In organizations that succeed:

- The data scientist writes the code and owns quality

- The product/business owner owns the metric and drives adoption

- The engineer owns the infrastructure and uptime

- The executive owns the budget and prioritization

Each person has skin in the game. When the model doesn't work, blame is distributed and solutions are faster. When it does work, the wins get celebrated across the org, not hoarded by the data team.

5. Team Composition Matters More Than Team Size

A common consulting recommendation: "Build a team of 15 data scientists, 10 ML engineers, and 5 data architects."

The enterprises that actually ship have smaller, harder-working teams. A typical winning composition:

- 3-5 data scientists (truly talented, opinionated, shipping-focused)

- 2-3 ML engineers (infrastructure obsessed, bored by theory)

- 1 product/analytics person (translating business to technical)

- Fractional: cloud architects, security, governance (as-needed, not full-time)

This isn't a team that publishes papers. It's a team that ships models that work.

WHY ENTERPRISES GET THIS WRONG

Two systemic reasons:

First, consultants have perverse incentives.

They're paid by the project, not by the outcome. A $1M engagement that produces three deployed models and $10M in ROI earns the firm the same revenue as a $1M engagement that produces 50 slide decks and zero models. So consultants optimize for the appearance of progress: frameworks, governance documents, org charts.

Second, it's emotionally harder to hold a partner accountable for business outcomes than for deliverables.

It's easy to ask: "Did they deliver the roadmap?" It's hard to ask: "Did the roadmap actually result in better decisions?" So executives default to checking boxes.


HOW TO EVALUATE REAL PRODUCTION EXPERTISE

When you're evaluating a data science partner, ask these 5 questions:

1. Show me models in production. What's the ROI of each?

Real teams have numbers. Consulting shops have case studies that mention "significant" or "measurable" ROI without the actual figure.

2. Walk me through a failure. What model shipped and didn't work?

Production teams kill things. Consulting teams rebrand them as "learning opportunities."

3. Who owns the code after you leave?

If the answer is "your team, with docs," they think about sustainability. If the answer is "your platform automatically retrains the model," they're still selling you tools.

4. What's your infrastructure stack?

If they name three vendors before naming their actual architecture, they're selling vendor relationships.

5. How do you measure success in the first 90 days?

Production teams have a specific metric and a kill threshold. Consulting teams talk about "maturity" or "readiness."

HOW QSS APPROACHES ENTERPRISE DATA SCIENCE

The enterprises that win aren't looking for another consulting firm or another tool vendor. They're looking for a partner who understands both the strategy and the production realities—and who has the discipline to execute both.

That's how QSS works.

We don't deliver roadmaps and disappear. We work with CTOs and data leaders to identify the single highest-impact model, build the infrastructure to run it at scale, measure it honestly, and then iterate. In our first 90 days, you'll see:

- One production model deployed that addresses a specific business metric

- Infrastructure in place for ongoing retraining, monitoring, and iteration

- Clear measurement of whether the model moves the needle—and a kill threshold if it doesn't

- Your team trained and equipped to maintain and evolve the system independently

We prioritize ruthlessly. We measure honestly. We build boring, reliable infrastructure. We focus on business outcomes, not complexity. And we transfer knowledge so your team owns the code and the outcome when we're done.

Unlike consulting firms, our success is tied to your success. If the model doesn't move the business, we iterate until it does. If the infrastructure isn't production-grade, that's on us. If your team doesn't own the system, we haven't done our job.

The enterprises we work with move from "stuck in consulting purgatory" to "shipping production models that drive revenue" in 4-6 months. That's not because we're smarter than consulting firms. It's because we're held to a different standard: business outcomes, not frameworks. Production systems, not roadmaps.

THE PATH FORWARD

Enterprise data science doesn't fail because of bad strategy or missing tools. It fails because organizations optimize for the wrong things: complexity over impact, frameworks over execution, team size over team quality.

The enterprises winning at data science in 2026 have one thing in common: they've stopped treating it like a consulting engagement and started treating it like a business unit. They measure it by production models shipped, revenue impact delivered, and technical debt avoided.

The strategy still matters. But strategy without production expertise is just expensive PowerPoint. And a team that ships without strategy is just expensive firefighting.

What enterprises need is both: sound strategy grounded in production reality, and execution discipline grounded in business outcomes.

That's the difference between data science that costs millions and data science that makes millions.

FREQUENTLY ASKED QUESTIONS

1: How long does it typically take to go from strategy to a production model?

A: If you're working with a production-focused partner, 60-90 days for your first model. If you're working with traditional consulting, 12-18 months to even begin implementation. The difference is ruthless prioritization (one model) vs. building frameworks (everything at once).

2: What's the typical ROI timeline for enterprise data science?

A: Production teams aim for ROI signals within 90 days. Enterprise-wide, you're looking at 2-4 years for mature programs, which is 3-4x longer than conventional tech deployments (IBM/Deloitte, 2025). The key: measure continuously and kill projects that don't show signals within the measurement window.

3: Should we hire a consulting firm for the roadmap, then hire engineers for execution?

A: Rarely a good idea. Most consulting roadmaps aren't executable—they're written by people who've never had to maintain infrastructure at scale. A better approach: hire a partner who does both strategy and execution, adapting the strategy as you learn from building. Partners like QSS—who understand production realities and can course-correct in real-time—outpace traditional consulting because they ship while they strategize instead of handing you a theoretical plan.

4: How do we know if we're in the 25% that deliver ROI or the 75% that don't?

A: You measure. Specifically: Define one business metric, ship one model, measure for 90 days. Did it move the metric by X%? If yes, expand. If no, kill it and try again. This is more important than any strategy document.

5: Why do so many models fail to reach production?

A: 88% of AI pilots never reach production because the infrastructure isn't built to scale them. Pilots are built in notebooks. Production requires pipelines, monitoring, and runbooks. Most teams don't invest in production infrastructure until after pilots succeed, which means they're scrambling to rebuild everything.

6: What's the difference between a data scientist who ships and a data scientist who theorizes?

A: Shippers care about business outcomes. Theorists care about model accuracy. Shippers kill things fast. Theorists tinker endlessly. When hiring, look for people who've shipped to production in previous roles—that experience is fundamental.

7: Is it really necessary to prioritize ruthlessly, or is building a portfolio of models better?

A: Ruthless prioritization is non-negotiable in year 1. Build one model that moves the needle, prove ROI, then add to the portfolio. Building a portfolio of mediocre models that don't move the needle is how you blow budgets and kill executive confidence.

8: What should we do with the consulting roadmap we already paid for?

A: Use it as a reference, not as scripture. Treat it as one perspective. Your first 90 days should focus on identifying the one model that delivers the most ROI, not executing the roadmap in order. Adjust the roadmap as you learn from building.

9: How do we avoid the trap of hiring a partner who ships chaotically (no governance)?

A: Ask for infrastructure details. Do they have versioning? Monitoring? Retraining automation? Do they have runbooks for handoff? These signal whether they've shipped at scale or just built one-off models. QSS, for example, prioritizes boring infrastructure—versioning, monitoring, automated retraining, clear runbooks for handoff—because that's what production systems actually need. If a partner is excited about flashy tools instead of infrastructure discipline, that's a red flag.
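As a rough illustration of the "boring" versioning described here (the article's earlier "git + artifact storage, nothing fancy"), the sketch below ties a model artifact to the code that produced it. It records the current git commit next to a SHA-256 hash of the artifact file. The `version_record` function, its fields, and the `training_data_ref` string are assumptions for illustration. The sketch uses only the Python standard library and the `git rev-parse HEAD` command.

```python
import hashlib
import subprocess
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def sha256_of(path: Path) -> str:
    """Content hash of the model artifact; any change to the file changes the hash."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        # Read in 1 MiB chunks so large artifacts don't need to fit in memory.
        for chunk in iter(lambda: fh.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


def current_git_commit() -> Optional[str]:
    """Commit hash of the training code, or None outside a git repository or without git."""
    try:
        result = subprocess.run(
            ["git", "rev-parse", "HEAD"], capture_output=True, text=True, check=True
        )
    except (subprocess.CalledProcessError, OSError):
        return None
    return result.stdout.strip()


def version_record(artifact: Path, training_data_ref: str) -> dict:
    """Hypothetical metadata to store next to the artifact (e.g. as JSON in object storage)."""
    return {
        "artifact": artifact.name,
        "sha256": sha256_of(artifact),
        "git_commit": current_git_commit(),
        "training_data": training_data_ref,
        "created_at": datetime.now(timezone.utc).isoformat(),
    }
```

Saving this record as JSON beside each artifact is enough to answer "which code and which data produced the model that is running now?" Any handoff runbook should be able to answer that question.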

10: Is data quality really the main blocker, or is it just an excuse?

A: 85% of failed AI projects cite poor data quality as a root cause, and only 12% of organizations have data of sufficient quality to support AI applications (Gartner, 2025). So yes, it's real. But the solution isn't a multi-year data cleansing project. It's working with data as-is and improving it incrementally as you ship models.

The Enterprise Data Science Gap: Why Strategy Without Production Expertise Costs Millions