Key Takeaways

- With over 2 million units already sold, Meta AI smart glasses are moving from concept to real-world adoption faster than expected.

- Beyond basic features, these glasses include hidden AI capabilities like scene understanding, real-time translation, and memory recall.

- Thanks to technologies like computer vision and multimodal AI, the glasses can understand what you see and respond accordingly.

- From “Be My Eyes” accessibility support to a built-in teleprompter and silent AI interaction, the real value lies in what’s not obvious.

- Whether it’s travel, content creation, accessibility, or daily productivity, these glasses are becoming practical tools, not just futuristic gadgets.

- Privacy, battery life, and social comfort remain open concerns, though each is improving as the technology evolves.

- Just as smartphones once did, AI glasses may redefine how we interact with technology, making it more natural, hands-free, and always available.

- Companies exploring AI-powered wearables early can build innovative, future-ready products in a fast-growing space.

Introduction

For years, smart glasses have been talked about as the next big thing in technology, but most attempts struggled to gain real traction. That conversation is starting to change, largely because of Meta’s Ray-Ban AI glasses. After spending billions on its metaverse ambitions, Meta is now focusing heavily on AI-powered wearables, and early signs suggest this strategy may be paying off. Since the launch of the second-generation Ray-Ban Meta glasses in 2023, the company has sold more than 2 million units, showing that interest in AI-enabled eyewear is growing quickly.

Industry experts believe this momentum could accelerate soon. Futurist Sinead Bovell recently suggested that 2026 may be the year AI glasses begin moving into the mainstream, much as smartphones and smartwatches gradually gained acceptance before becoming everyday devices. Meta itself is preparing for this shift and plans to significantly scale production in the coming years.

While most people recognize these glasses for obvious features like photos, video recording, or music playback, the reality is far more interesting. Behind the scenes, Meta has integrated powerful AI capabilities that many users still haven’t discovered.

What Are Meta AI Glasses?

Meta AI glasses are smart eyewear developed through a partnership between Meta and Ray-Ban, designed to combine everyday style with built-in artificial intelligence. At first glance they look like regular glasses, but the frames include powerful technology that allows users to interact with the digital world hands-free.

These glasses are designed to make everyday tasks easier while keeping your phone in your pocket.

Key capabilities include:

- Built-in Camera – Capture photos and short videos directly from your point of view.

- Open-Ear Speakers – Listen to music, podcasts, or calls without covering your ears.

- Multiple Microphones – Enable voice commands, clear calls, and interaction with Meta AI.

- Meta AI Voice Assistant – Ask questions, get information, or control features using simple voice prompts like “Hey Meta.”

- Phone Connectivity – Connect with your smartphone to share content, stream audio, or send messages.

In simple terms, Meta AI glasses turn regular eyewear into a hands-free smart device that helps you capture moments, access information, and interact with AI while on the go.

Why AI Smart Glasses Are the Next Big Thing

Technology has been gradually moving closer to the human body: from desktops to smartphones, then to smartwatches and earbuds. AI smart glasses are the next step in that evolution. Instead of constantly pulling out a phone, users can interact with technology directly through something they already wear every day.

What makes these glasses different is that they combine AI assistance with real-world awareness, creating a much more natural way to access information.

Here are a few reasons why AI smart glasses are gaining so much attention:

- True Hands-Free Computing

With voice commands and AI assistance, users can capture photos, send messages, or get information without touching a screen.

- AI That Understands What You See

Thanks to computer vision, AI glasses can analyze objects, text, or surroundings and provide helpful information instantly.

- A More Natural Way to Use Technology

Instead of looking down at a phone, users interact with technology while still engaging with the real world.

- Always-Available Personal AI Assistant

The glasses act like a wearable AI companion that can answer questions, translate languages, or help with everyday tasks.

- Content Creation from Your Perspective

Creators can record videos exactly from their point of view, something traditional cameras or phones cannot easily replicate.

These capabilities are why many tech experts believe AI smart glasses could eventually become one of the most important wearable technologies of the next decade.

Hidden Capabilities of Meta AI Glasses Most People Don’t Know

At first glance, Meta smart glasses may look like stylish everyday eyewear with a camera and speakers. But beneath the surface, they pack several intelligent features powered by AI and computer vision. Many users only explore the basic functions, while some of the most interesting capabilities remain largely unnoticed.

Here are some hidden features that make Meta AI smart glasses much more powerful than they appear.

AI Scene Understanding

One of the most fascinating capabilities of Meta wearable AI glasses is understanding what you’re looking at. Using the built-in camera and computer vision, the AI can analyze objects, landmarks, or text in your surroundings and provide helpful information. This makes the glasses feel less like a gadget and more like a real-time assistant that understands your environment.

Real-Time Language Translation

The glasses can help bridge language barriers. With the help of AI, Meta Ray-Ban smart glasses can translate conversations in real time and deliver the translated audio through the open-ear speakers. This feature is particularly useful when traveling or communicating with people who speak different languages.

AI Memory Feature

Another interesting capability is the ability to “remember” things. You can ask the glasses to remember an object, location, or item you are looking at. Later, you can ask Meta AI about it, and the system will recall that visual information.
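To make the idea concrete, here is a minimal Python sketch of how a “remember this” feature could work in principle. It assumes the camera frame has already been turned into a short text description (for example, by an image-captioning model), and the `MemoryStore` class and its simple keyword matching are hypothetical illustrations, not Meta’s implementation.

```python
import re
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Optional


def _words(text: str) -> set:
    """Lowercase set of words, ignoring punctuation."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class Memory:
    description: str       # assumed: a caption generated from the camera frame
    created_at: datetime


@dataclass
class MemoryStore:
    """Hypothetical store behind a 'remember this' voice command."""
    items: List[Memory] = field(default_factory=list)

    def remember(self, description: str, when: Optional[datetime] = None) -> Memory:
        memory = Memory(description, when or datetime.now())
        self.items.append(memory)
        return memory

    def recall(self, query: str) -> List[Memory]:
        """Return memories sharing words with the query, best match first."""
        query_words = _words(query)
        scored = []
        for memory in self.items:
            overlap = len(query_words & _words(memory.description))
            if overlap:
                scored.append((overlap, memory.created_at, memory))
        # More shared words wins; ties go to the most recent memory.
        scored.sort(key=lambda entry: (entry[0], entry[1]), reverse=True)
        return [memory for _, _, memory in scored]


if __name__ == "__main__":
    store = MemoryStore()
    store.remember("car parked on level 3 near the blue pillar", datetime(2025, 1, 1, 9, 0))
    store.remember("hotel room key left on the desk", datetime(2025, 1, 1, 10, 0))
    print(store.recall("Where did I park my car?")[0].description)
    # car parked on level 3 near the blue pillar
```

A production assistant would more likely match memories by meaning (semantic search) rather than shared words, so that “park” could also match “parked.”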

QR Code and Text Scanning

Instead of opening your phone camera, the glasses can scan QR codes or read text directly through the built-in camera. This allows users to quickly access links, menus, or information simply by looking at them.

“Be My Eyes” Accessibility Support

Meta has also integrated support for the Be My Eyes service. By saying “Hey Meta, be my eyes,” users can connect with sighted volunteers who can see through the glasses’ camera feed and help identify objects or guide them through their surroundings. For visually impaired users, this feature can be incredibly helpful in daily life.

Silent AI Interaction with Neural Band

Meta is experimenting with a Neural Band, a wearable wrist device that detects small muscle signals from the hand. This allows users to control the glasses or interact with AI without speaking, simply by making subtle gestures.

AI Highlight Detection

When recording videos, the AI can analyze the footage and identify key or interesting moments automatically. This helps users capture highlights without manually reviewing long recordings later.
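Meta hasn’t published how its highlight detection works. As a rough illustration of the general idea, the Python sketch below uses a simple stand-in heuristic: it flags moments where the picture changes much more than usual between frames. The frame format (flat lists of grayscale pixel values), the function names, and the `threshold_sigma` parameter are all assumptions for this example; a real system would likely rely on trained models that also consider faces, audio, and motion.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def frame_change_scores(frames: Sequence[Sequence[float]]) -> List[float]:
    """Mean absolute pixel difference between each frame and the one before it."""
    scores = [0.0]  # the first frame has nothing to compare against
    for prev, cur in zip(frames, frames[1:]):
        scores.append(sum(abs(a - b) for a, b in zip(prev, cur)) / len(cur))
    return scores


def detect_highlights(frames: Sequence[Sequence[float]], fps: float = 1.0,
                      threshold_sigma: float = 1.5) -> List[Tuple[float, float]]:
    """Return (start_s, end_s) windows where visual change is unusually high."""
    scores = frame_change_scores(frames)
    # Anything well above the clip's typical amount of change counts as "interesting".
    cutoff = mean(scores) + threshold_sigma * pstdev(scores)
    windows: List[List[int]] = []  # [start_frame, end_frame] pairs
    for i, score in enumerate(scores):
        if score <= cutoff:
            continue
        # Extend the previous window if this frame directly follows it.
        if windows and i == windows[-1][1] + 1:
            windows[-1][1] = i
        else:
            windows.append([i, i])
    # Convert frame indices to seconds.
    return [(start / fps, end / fps) for start, end in windows]


if __name__ == "__main__":
    still, flash = [10] * 4, [200] * 4
    frames = [still] * 5 + [flash] + [still] * 4
    print(detect_highlights(frames))  # [(5.0, 6.0)]
```

Raising `threshold_sigma` makes the detector more selective; lowering it surfaces more candidate moments for the user to review.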

Hands-Free Messaging

With simple voice commands, users can send messages through apps like WhatsApp or Messenger without touching their phone. The AI converts speech into text and sends it instantly.

Live Video Sharing

Another useful feature is the ability to stream what you see during video calls. This allows friends, family, or colleagues to view your perspective in real time, which can be helpful for collaboration or remote assistance.

Teleprompter Built into the Glasses

A newer experimental feature allows text or notes to appear discreetly inside the glasses. This works like a built-in teleprompter, helping creators, presenters, or speakers read scripts without looking down at a phone or paper. It’s especially useful for recording content, giving presentations, or remembering key talking points.

Together, these capabilities show that Meta AI smart glasses are evolving into more than just wearable cameras; they are becoming intelligent assistants that interact with the real world around you.

The Technology Behind Meta AI Glasses

What makes Meta AI smart glasses impressive isn’t just the hardware; it’s the combination of several advanced technologies working together behind the scenes. These technologies allow the glasses to understand the environment, process voice commands, and deliver useful information in real time. While the device looks simple on the outside, the system powering it is quite sophisticated.

Here are some of the key technologies that make Meta Ray-Ban smart glasses possible:

- Computer Vision

Computer vision allows the glasses to analyze what the user is looking at through the built-in camera. This technology helps identify objects, read text, recognize landmarks, and support features like scene understanding or QR code scanning.

- Multimodal AI

The AI used in Meta wearable AI glasses is multimodal, meaning it can process multiple types of input at the same time, such as voice, images, and context. This allows the system to understand a spoken question while also analyzing what the camera is seeing.

- Speech Recognition

Advanced speech recognition enables users to interact with the glasses using simple voice commands like “Hey Meta.” The system converts spoken language into commands or queries that the AI assistant can process instantly.

- Edge AI Processing

Some tasks are processed directly on the glasses or the paired smartphone rather than relying entirely on cloud servers. This edge processing reduces latency and helps deliver faster responses for real-time interactions.

- Wearable Hardware Sensors

The glasses include a camera, multiple microphones, speakers, and motion sensors. These components work together to capture audio, track user interactions, and provide a seamless, wearable experience.

Together, these technologies turn Meta smart glasses into more than just connected eyewear. They function as a compact AI system designed to assist users in everyday situations.
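As a rough illustration of how multimodal input and edge processing can fit together, the Python sketch below attaches a camera frame only when a spoken question seems to be about what the user is looking at, then decides whether a request can be handled locally or needs the cloud. Everything here is hypothetical: the phrase lists, `build_query`, and `route` are invented for the example, and real assistants typically use trained intent classifiers rather than keyword matching.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical phrases suggesting the question is about the camera view.
VISION_CUES = ("what am i looking at", "what is this", "read this", "look at")

# Hypothetical simple commands assumed to be cheap enough to run locally.
LOCAL_COMMANDS = ("take a photo", "start recording", "volume up", "volume down")


@dataclass
class MultimodalQuery:
    transcript: str                                # text produced by speech recognition
    image: Optional[bytes] = None                  # camera frame, attached only when relevant
    context: dict = field(default_factory=dict)    # e.g. locale or time of day


def build_query(transcript: str, capture_frame: Callable[[], bytes],
                context: Optional[dict] = None) -> MultimodalQuery:
    """Combine voice and (optionally) vision into one multimodal request."""
    text = transcript.lower()
    wants_vision = any(cue in text for cue in VISION_CUES)
    # Only call the camera when the question seems to need it.
    image = capture_frame() if wants_vision else None
    return MultimodalQuery(transcript, image, context or {})


def route(query: MultimodalQuery, online: bool = True) -> str:
    """Decide where a request runs: 'on_device', 'cloud', or 'unavailable'."""
    text = query.transcript.lower()
    if query.image is None and any(cmd in text for cmd in LOCAL_COMMANDS):
        return "on_device"     # simple command: no network round trip needed
    if not online:
        return "unavailable"   # open-ended question but no connection
    return "cloud"             # larger multimodal model assumed to live in the cloud


if __name__ == "__main__":
    q = build_query("Hey Meta, what am I looking at?", capture_frame=lambda: b"<jpeg bytes>")
    print(route(q))  # cloud
    print(route(build_query("Hey Meta, take a photo", capture_frame=lambda: b"")))  # on_device
```

The design choice this illustrates is the latency trade-off described above: short, predictable commands skip the network entirely, while open-ended questions that combine speech and images go to a larger model.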

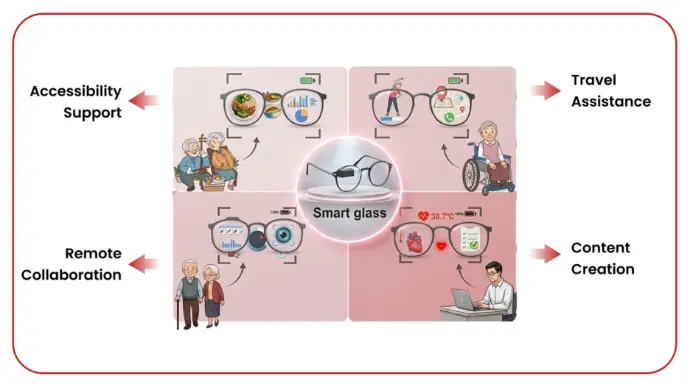

Real-World Use Cases of Meta AI Glasses

While Meta AI smart glasses sound futuristic, their real value shows up in everyday situations. They’re designed to make simple tasks easier, faster, and more convenient without constantly reaching for a phone.

Here are some practical ways people are already using them:

- Travel Assistance

When visiting a new place, the glasses can help translate conversations, read signs, or provide quick information about landmarks. This makes exploring new cities much easier.

- Content Creation

Creators can capture photos and record videos directly from their point of view using Meta Ray-Ban smart glasses. This makes it easier to capture authentic moments while walking, traveling, or attending events.

- Accessibility Support

With features like “Be My Eyes,” visually impaired users can connect with volunteers who help describe objects or surroundings through the glasses’ camera.

- Remote Collaboration

Users can share their live perspective during video calls, allowing colleagues or friends to see exactly what they’re seeing. This can be useful for troubleshooting, guidance, or teamwork.

- Daily Productivity

Simple tasks like sending messages, asking questions, setting reminders, or listening to audio can be done through voice commands using Meta smart glasses, helping users stay connected while keeping their hands free.

These examples show how Meta wearable AI glasses are gradually becoming practical tools for both everyday life and professional use.

Challenges and Concerns Around AI Smart Glasses

Like any emerging technology, AI smart glasses come with a few challenges. While devices like Meta Ray-Ban smart glasses are opening new possibilities in wearable tech, there are still some areas that companies and users are continuing to evaluate and improve.

Here are a few considerations often discussed around this technology:

- Privacy Awareness

Since Meta AI smart glasses include cameras and microphones, some people may initially feel cautious about being recorded. To address this, Meta has added visible LED indicators that light up when the camera is active.

- Data and Security Considerations

Because these glasses interact with AI systems and cloud services, protecting user data is important. Tech companies are continuously improving encryption and privacy controls to keep information secure.

- Social Comfort

As with many new technologies, it can take time for people to become comfortable with wearable devices that include cameras or AI assistants.

- Battery Life Improvements

Since the glasses pack multiple features into a compact frame, battery capacity is still evolving as manufacturers work to balance performance with size and comfort.

Overall, these challenges are part of the natural evolution of new technology, and companies are actively working to refine the experience as AI wearables become more common.

The Future of AI Smart Glasses

AI smart glasses are still evolving, but many experts believe they could become the next major computing device, much as smartphones transformed personal technology. Devices like Meta AI smart glasses are already showing how AI can move from screens into everyday wearables.

Here are a few developments shaping the future of this technology:

- Augmented Reality Experiences

Future versions of Meta smart glasses may include lightweight AR displays that show directions, notifications, or helpful information directly in your field of view.

- Smarter Context-Aware AI

AI assistants may eventually understand your surroundings and provide helpful suggestions without needing constant voice commands.

- Gesture and Neural Controls

Technologies like wristbands or gesture tracking could allow users to control Meta wearable AI glasses through subtle hand movements instead of touch.

- Real-Time Information Layer

Imagine walking through a city and instantly seeing restaurant ratings, translations, or landmark details through Meta Ray-Ban smart glasses.

As this technology improves, AI glasses could gradually reduce our dependence on smartphones and create a more natural, hands-free way to interact with digital information.

How QSS Technosoft Can Help Build AI-Powered Smart Devices

The rapid rise of devices like Meta AI smart glasses shows that the future of technology is moving toward AI-powered wearables and smart devices. For businesses looking to enter this space, building such products requires expertise across AI, hardware integration, and scalable software platforms. This is where the right technology partner becomes critical.

QSS Technosoft helps companies design and develop intelligent digital solutions that power next-generation devices and applications.

Here’s how QSS can support businesses in this space:

- AI-Powered Wearable Applications

Building smart applications that work seamlessly with wearable devices such as Meta wearable AI glasses, smart headsets, and IoT-based wearables.

- Computer Vision Solutions

Developing AI systems that can analyze images, recognize objects, read text, or understand real-world environments, similar to the capabilities used in Meta Ray-Ban smart glasses.

- Smart Device Software Development

Creating custom software that connects hardware devices, mobile apps, and cloud platforms into one unified ecosystem.

- AI Integration

Integrating advanced AI capabilities like voice assistants, real-time analytics, and contextual intelligence into digital products.

- Cloud-Powered AI Platforms

Building scalable cloud infrastructure that supports AI processing, data management, and real-time device connectivity.

With strong expertise in AI, cloud, and advanced software development, QSS Technosoft helps businesses turn innovative ideas into practical, market-ready smart technology solutions.

Conclusion

AI glasses are quickly moving from experimental gadgets to the next step in human–AI interaction. Devices like Meta AI smart glasses show how technology is shifting from handheld screens to hands-free, real-world experiences powered by artificial intelligence. As these wearables become smarter with computer vision, voice AI, and contextual awareness, they could eventually reshape how people access information, create content, and interact with digital systems.

For businesses, this shift also opens new opportunities. Companies that start exploring AI-powered wearables and smart device platforms early can gain a strong advantage as this technology becomes more mainstream.

If you're planning to build AI-powered wearable solutions or smart device platforms, QSS Technosoft can help bring your idea to life. With expertise in AI, cloud, and smart application development, the team can help turn innovative concepts into scalable, real-world products.
